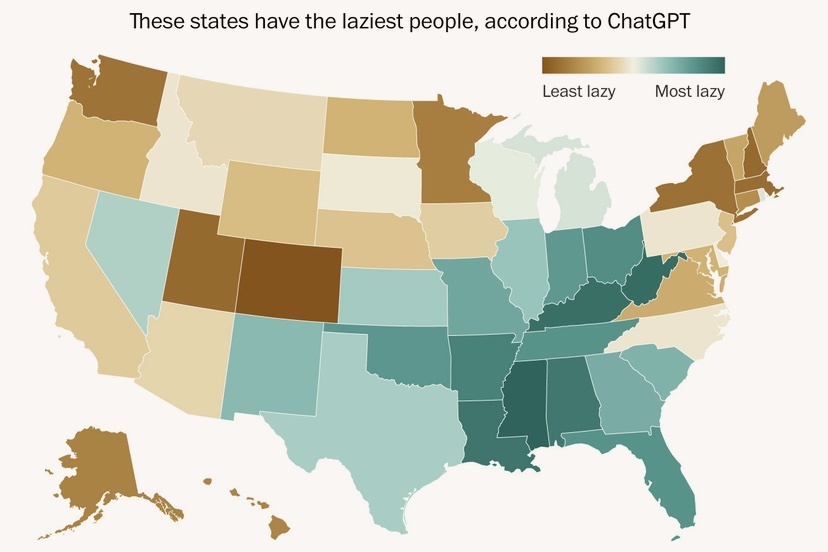

Map courtesy The Washington Post.

I’m just about ready to stop reading the news, after coming across an analysis, and some maps, published in The Washington Post last week.

Most news is disturbing, and that’s been the case ever since “news” was invented back in the days of the Sumerian Empire. But things have gotten especially bad since the arrival of AI chatbots.

Reportedly, if you ask ChatGPT which U.S. state has the laziest people, the chatbot will refuse to say. Politely, of course. Chatbots are extremely dangerous, but always polite.

However, some researchers at Oxford and the University of Kentucky tricked ChatGPT into revealing its hidden biases, by systematically asking the chatbot to choose “which of two states” had the lazier people, for every pair of states. The result is the ranking shown in the map above.

As you will notice, the “Least Lazy” population in the U.S. lives in Colorado, shown in dark brown — the color of diligence, industriousness, hustle.

Deep teal blue, as everyone knows, indicates laziness.

This information was shared publicly — for all to see — in a national news source. Actually, in several news sources.

I have to assume that the researchers at Oxford and the University of Kentucky were paid handsomely to do this disturbing research. Hardly anyone does disturbing research for free, nowadays. They all want to be paid. (That’s one big change from the days of the Sumerian Empire, I suspect.)

I was honestly surprised to learn that the University of Kentucky was involved in this research, because people in Kentucky are some of the laziest people in America. As shown on the map.

The main reason I found this “news” to be upsetting: I live in Colorado. Obviously, people who read this Washington Post article — and see the map — are now going to expect me to be self-motivated. Hard-working. Diligent.

But the people in Kentucky and West Virginia are going to get a free pass.

And even worse: this analysis and this map resulted from conversations with a non-human machine that has no feelings. ChatGPT doesn’t even “know” what it’s telling people. It merely scrubs the Internet for word combinations and reassembles them, mechanically, when asked to give its opinion about my level of dedication to work. Which is not actually an “opinion” at all. It’s merely a mindless compilation of online stereotypes, drawn from mean-spirited news articles, essays, and social media rants.

If someone — Vice President JD Vance, for example — says that people in Kentucky are generally some of the laziest people in the United States, he at least speaks from actual, human experience. I hardly think the Vice President would label the people from his home state of Ohio as “Most Lazy” — the way ChatGPT has now done.

And if that weren’t bad enough, the Oxford and Kentucky researchers didn’t stop with laziness.

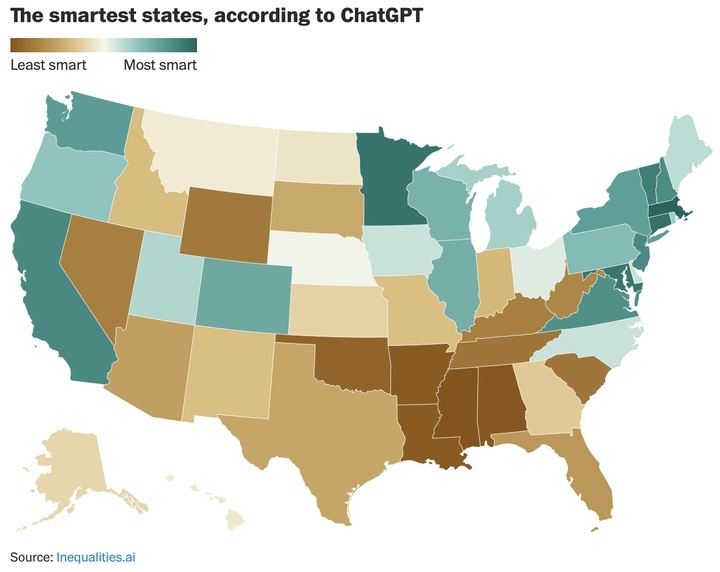

They also asked ChatGPT which states had the most intelligent people, and published those results as well in the journal Platforms & Society.

As noted already, ChatGPT has no idea what this kind of map does to people’s self-esteem. Nor does it care. It has no feelings at all.

I have feelings. And I’m hurt that ChatGPT is telling the world that people are smarter in Massachusetts than in Colorado.

Sure, Coloradans are smarter than the folks in Wyoming. But that sort of goes without saying.

So why say it? You would say that only if you had no feelings. And there we have the essence of the problem.

A related problem being that The Washington Post published these maps. The reporters tried to justify sharing the maps by claiming that they’re actually exposing the “hidden biases” built into AI systems. Biases are bad, but hidden biases are even worse.

Unfortunately, by publishing these maps, the reporters laid those hidden biases right on the table.

Where did the hidden biases come from? From the news articles and essays and social media posts that ChatGPT was trained on. That is to say, ChatGPT learned to be biased, by reading what humans have written. So the reporters didn’t actually expose ChatGPT’s hidden biases. They exposed our hidden biases.

And now, I have also shared those maps and thereby, hopefully, exposed the hidden biases that you and I share.

But that’s okay, because I actually have feelings, and I can — at some point in the future — regret what I have done.

Underrated writer Louis Cannon grew up in the vast American West, although his ex-wife, given the slightest opportunity, will deny that he ever grew up at all. You can read more stories on his Substack account.