In a bold move to dominate the future of artificial intelligence, Beijing is making AI education mandatory for all students — including elementary schoolers as young as six. Starting this fall, schools must provide at least eight hours of AI instruction per year, either as a standalone course or integrated into subjects like science and IT.

Why is China Doing This?

— from the article “From the Classroom to the Future, Beijing Makes AI Learning Mandatory” on EducationJournalist.com, March 2025.

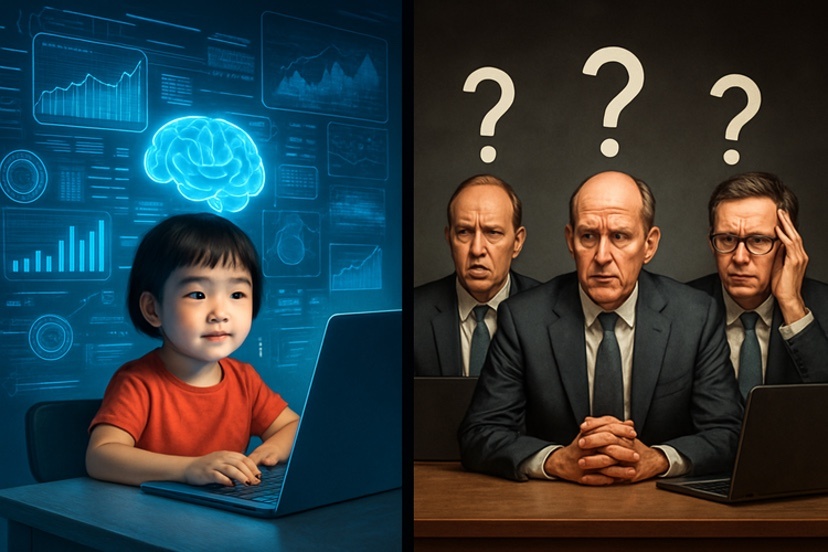

Before we get too excited (and admittedly, it’s easy to get too excited these days), the statement that “Beijing is making AI education mandatory” apparently refers to the “Beijing Municipal Education Commission”… not “the Communist government in Beijing”. The Communist government has better things to get us excited about than whether Chinese six-year-olds are more adept at using AI than corporate CEOs in the U.S.

For some reason, a lot of Asian news outlets were covering this story back in March. For example, the EducationJournalist.com article quoted above appeared on a website based in Bangalore, India. The only prominent U.S. media outlet that reported this story in March seems to be Business Insider. But I have a feeling that most Business Insider articles are now being written by AI, so maybe that doesn’t really count.

In fact, I found myself wondering if the EducationJournalist.com article… the article that sparked my interest in this topic… was written by AI. So I naturally asked my favorite AI app, Claude.com, if that March 2025 article was likely AI-written.

Claude didn’t pull any punches. From his response:

Based on my analysis of the article, there are several indicators suggesting it was likely written by AI:

Strong AI Writing Indicators:

- Generic structure and formatting – The article follows a very formulaic pattern with predictable headings like “Why is China Doing This?” and “What’s Next?” that are common in AI-generated content.

- Superficial analysis – The content lacks depth and original insights, instead presenting basic information in a simplified manner typical of AI writing.

- Repetitive language patterns – Phrases like “AI is the future” and repeated emphasis on China’s “dominance” and “competitive edge” show the repetitive nature common in AI text generation.

- Bullet point reliance – Heavy use of bullet points and structured lists rather than flowing narrative prose.

- Vague sourcing – The article makes specific claims about policies and statistics but provides no citations or direct quotes from officials, which is unusual for legitimate journalism.

- Sensationalized tone – The dramatic language (“bold move,” “shook the industry”) combined with superficial coverage suggests AI content optimized for engagement rather than serious reporting.

- Generic ending – The conclusion with rhetorical questions (“Will other countries step up to compete?”) is a common AI writing technique.

Claude’s response was, of course, written by AI. So it features generic structure, superficial analysis, repetitive language patterns, bullet point reliance, vague sourcing, sensationalized tone and a generic ending.

Only some of those patterns are found in my own humor columns, like this one. The sensationalized tone, for example.

Speaking as a human, I also sometimes conclude with a generic ending, like “Will other countries step up to compete?”

Which is exactly what I was thinking about as I researched this topic. Especially, will the U.S. step up to compete? Do we really want our kindergartners playing with blocks and Legos and pretending to make pizzas out of cardboard? When they could be programming ChatGPT to solve complex engineering problems or to create ‘deep fake’ political memes?

From whatever version of AI was responsible for the EducationJournalist.com article:

With AI reshaping industries — from automation to healthcare — early education in AI will give China a competitive edge in the global AI race. The goal is to develop a “teacher-student-machine” learning model that not only teaches AI but also integrates AI ethics, ensuring responsible innovation.

Why, exactly, anyone would be racing to promote a system that features generic structure, superficial analysis, repetitive language patterns, bullet point reliance, vague sourcing, sensationalized tone and generic endings is not immediately clear.

I think we already have plenty of those features in our U.S. businesses and schools.

But I appreciate the mention of “ethics”. Something we do not have plenty of.

Underrated writer Louis Cannon grew up in the vast American West, although his ex-wife, given the slightest opportunity, will deny that he ever grew up at all. You can read more stories on his Substack account.